By Seraina Kull

On the 10th of November, the Graduate Institute’s Technology and Security Initiative (TechSec) had the honour of hosting a panel discussion on lethal autonomous weapons systems (LAWS) and how they are regulated in the current international legal framework. The panelists included H.E. Amb. Marc Pecsteen, Reto Wollenmann, and the Graduate Institute’s own Prof. Andrew Clapham. PhD student Tianze Zhang moderated the panel.

H.E. Amb. Pecsteen, as the current Chair of the Group of Governmental Experts (GGE), explained some challenges of regulating LAWS. The GGE, created in 2016, looks at whether emerging weapons systems are compatible with international humanitarian law (IHL) and has agreed on 11 guiding principles so far. This year, the GGE’s aim is to go beyond these guiding principles by formulating recommendations for a normative framework. For now, the majority of GGE participants, which include State parties to the Convention on Certain Conventional Weapons (CCW) as well as civil society actors, agree that in addition to IHL there should be a new (and distinct) legal instrument to address LAWS. However, some delegations do not agree with this approach and see new regulations as premature. Still, the GGE is seeing growing convergence towards a dual-track approach, which would prohibit the use of certain LAWS and regulate others to conform with IHL. Reto Wollenmann agreed with this approach, adding that LAWS without any human control cannot be used in accordance with IHL and should therefore be completely banned.

Distinguishing between levels of human involvement is also important to tackle issues of accountability. The concept of command responsibility is central to the prosecution of war crimes by the International Criminal Court. However, Prof. Clapham noted that under current law, an individual would not only need to be somewhere in the chain of command or involved in the programming of the weapon, but also in “effective control of the weapon at the time”, which is evidently not the case with fully autonomous weapons. Working groups should be concerned with updating laws on accountability, as the possibility of individual punishment (including asset freezes and travel bans) can deter war crimes.

Agreeing on how to regulate a complex issue involving Artificial Intelligence and human lives, however, is no easy task. Prof. Clapham described how LAWS can potentially process new information more quickly than humans and differentiate between military targets and civilians. However, he also raised the question of how moral it is for machines to do so. Even if LAWS get sophisticated enough to make decisions in accordance with IHL, is it moral for a machine to kill humans? According to the ‘just war’ theory, killing is only morally correct when someone’s life is being threatened. This would mean that machines may only kill when a human life is threatened. Further, even if LAWS get past all IHL objectives, it would still not be moral to kill when the only ‘life’ threatened is the machine’s, which leads to another moral consideration. Prof. Clapham argued that the use of LAWS “will make war more likely”. The countries that can afford LAWS could be more inclined to use lethal force when they can send machines into armed conflict instead of “risking the lives of their soldiers”. For instance, in accordance with the USA’s War Powers Act of 1973, the President must ask Congress for permission before inserting human beings into hostilities. Arguably, the use of LAWS would not require prior permission, and in the case of Libya, the US even argued that the use of drones did not constitute hostilities at all.

All speakers agreed that the discussion on regulating LAWS has come a long way. Seven years ago, the discussion was very broad, and it took some time for the LAWS debate to centre around IHL. Still, legal issues outside of IHL, technological expertise and political considerations continue to play an important role in advancing a new regulatory framework for this complex issue. The GGE will report to the CCW Review Conference in December of this year, but the issue of appropriately regulating LAWS will concern generations to come.

Seraina Kull is a board member and content creator for the Technology and Security Initiative at the Graduate Institute.

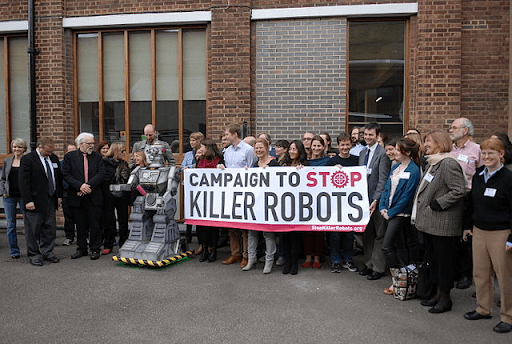

Image from: Wikimedia Commons
